How my algorithm accidentally became O(n!)

Advent of Code is a yearly event where you solve a series of programming puzzles. The puzzles are released daily and you can solve them in any language you like. I like to do them (until I give up after a few days), and this year is no different .

This year’s day four puzzle was interesting, and - that’s why I’m writing this blogpost - it becomes a good example of how sometimes your algorithms perform significantly worse than you expect them to.

The puzzle

Let’s first look at the puzzle. Effectively, you’re given a list of Scratchards. Each scratchcard can win other scratchcards. The cards are ordered,

and you always win the cards behind it. In other words: Suppose your looking at card n, which wins 5 other cards. You can then add cards n + 1,

n + 2, n + 3, n + 4 and n + 5 to your collection.

You’re tasked with finding the total amount of cards you’ve won.

First attempt: Naive solution

In my code, I started out with this 1 :

cards = [

(0, 4), # Card 0 wins 4 other cards

(1, 0), # Card 1 wins 0 other card

(2, 3), # Card 2 wins 3 other cards

# ...

]I then wrote a function that goes through all cards:

cards_stack = [card for card in cards]

for index, num_won in cards_stack:

cards_stack.extend(cards[index + 1:index + num_won + 1])We can then simply count the amount of cards in the stack:

print(len(cards_stack))I tried it out with the example on the page and sure enough; it ran in a few milliseconds. Then I tried running it with the actual input. It took about 50 seconds. 50 seconds might not sound that bad, but it’s stunning for such a puzzle.

So why does this happen?

Big O notation

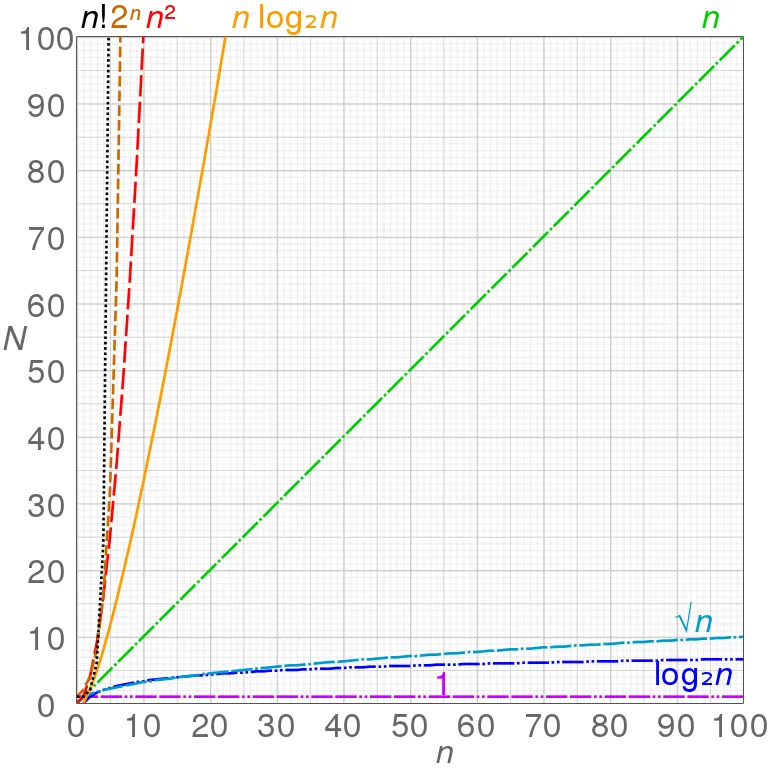

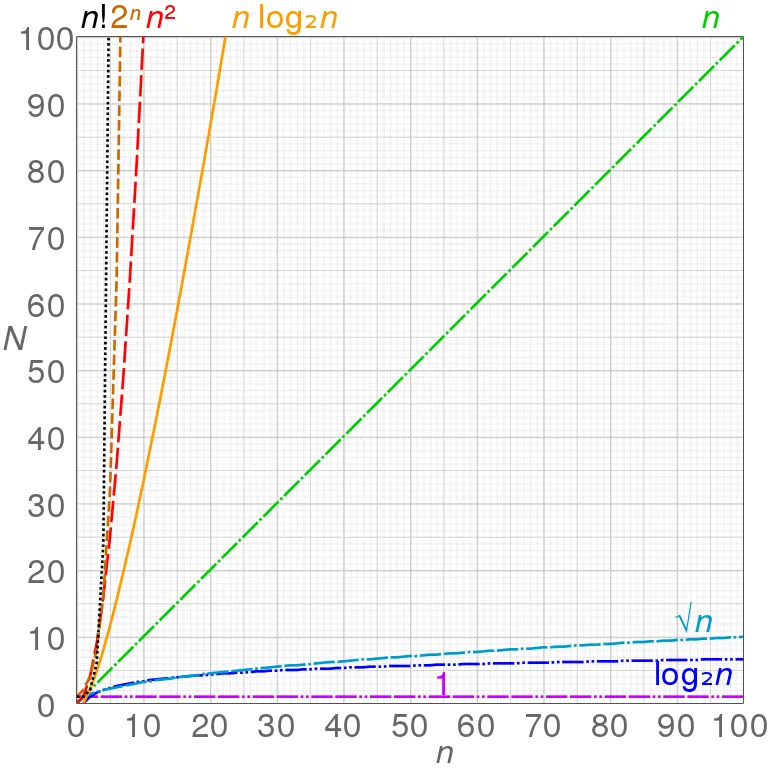

The Big O notation is a way to describe the performance of an algorithm. It gives an upper bound on the runtime of an algorithm on the size of its input. We write it like this: . The function is the upper bound on the runtime of the algorithm. Common Big Os are:

-

: The algorithm runs in constant time. This means that the runtime of the algorithm is independent of the size of the input.

-

: The algorithm runs in linear time. This means that the runtime of the algorithm is proportional to the size of the input. Intuitvely, one would need to look at every element in the input.

A good example is searching for an element in an (unsorted) array. You need to look at every element in the array to find the element you’re looking for.

-

: The algorithm runs in quadratic time. This means that the runtime of the algorithm is proportional to the square of the size of its input.

A good example is the bubble sort algorithm. It compares every element in the array with every other element in the array.

-

(pronounced “n log n”): The algorithm runs in linearithmic time. This means that the runtime of the algorithm is proportional to the size of the input times the logarithm of the size of the input.

A good example is the merge sort algorithm. It splits the array in half, sorts the two halves, and then merges them together. The runtime of the algorithm is because the array is split in half times, and each split takes time.

-

. The algorithm runs in logarithmic time. This means that the runtime of the algorithm is proportional to the logarithm of the size of the input.

A good example is the binary search algorithm. It splits the array in half, and then searches the half that contains the element you’re looking for. It then splits that half in half, and so on. The runtime of the algorithm is because the array is split in half times.

Careful with Big O

Note that Big O gives an asymptotic approximation of the runtime. As the size of the input grows towards infinity, the runtime of the algorithm is bounded by the function inside the O.

This explains why : If an algorithm grows at most linearlly, it will trivially also grow at most quadratically.

However, if an algorithm is in , this doesn’t mean it will always be slower than an algorithm in . Let’s call the algorithm in (quadratic), and the algorithm in (linear). Let’s assume takes 2 second to run on an input of size 10 and takes 10 seconds to run on an input of size 10.

If we now double the input size to 20, will take 4 8 seconds to run 2 , and will take 20 seconds to run. So is faster than .

When talking about Big O notation, always remember that we’re talking about the asymptotic behaviour of an algorithm.

Let’s look at how different functions grow:

It’s easy to see that .

So why is the algorithm ?

Consider this: Suppose you have 100 cards. The first card wins 99 cards, the second one wins 98 cards, and so on. The last card wins 0 cards (basically each card wins all others behind it). The algorithm will look at the first card, and add 99 cards to the stack. Each of the added cards will add 98 cards to the stack. Those in turn will each add 97 cards to the stack.

Having realized this, what’s a better way to solve the puzzle?

Memoization avoids unnecessary computations

When we take a step back, we can actually see that there is a different way to express our algorithm:

cards = [

(0, 4), # Card 0 wins 4 other cards

(1, 0), # Card 1 wins 0 other card

(2, 3), # Card 2 wins 3 other cards

# ...

]

def get_num_cards_won(card_index):

ret = cards[card_index][1] # This card wins this many other cards

for index, num_won in cards[card_index + 1:card_index + num_won + 1]:

ret += get_num_cards_won(index) # The cards it won, win this many other cards themselves

return retThis function is recursive, in other words: It calls itself. Moreover, the function is pure: It doesn’t have any side effects. Side effects are actions that change the state of the program (setting a variable, printing to the console, etc.). The nice thing about pure functions is that for a given input they will always return the same output .

We can exploit this behavior to avoid unnecessary computations. Instead of actually calling the function, we can store the result of a function call, and only compute it, if it’s missing 3 :

cache = {}

def get_num_cards_won(card_index):

if card_index in cache: # If we've already done the calculation, just use that

return cache[card_index]

ret = cards[card_index][1] # This card wins this many other cards

for index, num_won in cards[card_index + 1:card_index + num_won + 1]:

ret += get_num_cards_won(index) # The cards it won, win this many other cards themselves

cache[card_index] = ret

return retThis is called memoization. We check if our cache contains the result of the function call for a given input, and only do the work if absolutely necessary.

Exercise for the reader

Fibonacci numbers are a famous example of a recursive function. The -th Fibonacci number is defined as , with and . You can try implementing a function that computes the -th Fibonacci number and which uses memoization.

This approach drastically decreases the amount of calculations we need to do: Because for each card we calculate the result only once, we only need to look at each card once. This means that our algorithm is now .

Benchmarks

My solution includes a tiny benchmark that compares the two solutions. It

uses time.perfcounter to measure the time each solution takes to run. I ran it on my machine (Ryzen R9 7950X, 64GB RAM 4 ) and got the following

results:

Part 2 (naive): (50.370501 seconds)

Part 2 (with memoization): (0.0506474 seconds)That’s an improvement by 99.9%!

Closing remarks

This only scratches the surface of algorithms and Big O notation: Typically when analyzing algorithms, we’re interested in best case, average and worst case runtime complexity 5 . We can also look at the ammortized runtime complexity of an algorithm. Moreover, we haven’t considered space complexity yet.

Lastly, Python has the functools.cache decorator to do memoization.

Footnotes

-

Don’t judge this too hard, this is mainly for illustrative purposes. My actual code can be found here . ↩

-

There was an error in the previous version of the article. It has been fixed now. ↩

-

This is a very naive implementation of memoization. A better implementation would be to use a dictionary that maps the input to the output, and then use a decorator to memoize the function. I didn’t do this because I wanted to keep the code as simple as possible. Also our function isn’t technically pure anymore because it has side effects (it mutates the cache). ↩

-

Not that it matters that much. ↩

-

For example, for sorting, the best case is an already sorted array and the worst case is an array sorted in reverse order. ↩